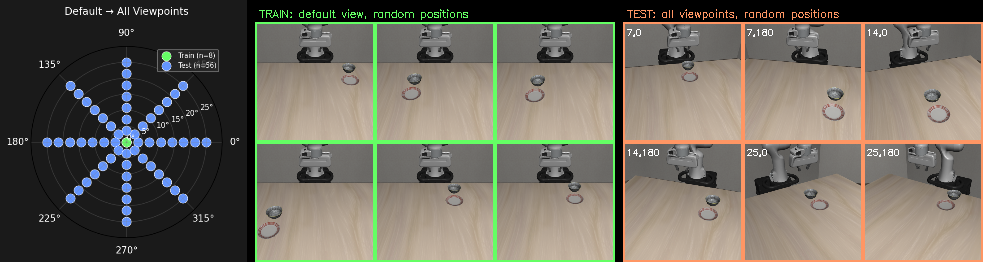

Comparing baseline (no augmentation) vs perspective augmentation, evaluated across all 64 viewpoints (8x8 spherical cap grid)

Left: polar plot showing train viewpoints (green, theta=0) vs test viewpoints (blue). Middle: sample training frames (default viewpoint, varied object positions). Right: sample test frames (varied viewpoints).

The model is trained only at the default camera angle (theta=0). At test time, the camera is moved across 64 positions on a spherical cap.

| Theta | 0.0 | 3.6 | 7.1 | 10.7 | 14.3 | 17.9 | 21.4 | 25.0 |

|---|---|---|---|---|---|---|---|---|

| Baseline | 67% | 50% | 46% | 17% | 12% | 4% | 8% | 0% |

| Persp. Aug | 54% | 33% | 38% | 21% | 21% | 0% | 0% | 4% |

| Delta | -13% | -17% | -8% | +4% | +9% | -4% | -8% | +4% |

Green = augmentation improved over baseline, red = augmentation hurt. The augmentation slightly helped at mid-range theta (10.7-14.3) but hurt at near-range (0-7.1) and didn't help at far-range (17.9-25.0).

| theta \ phi | 0 | 45 | 90 | 135 | 180 | 225 | 270 | 315 | Avg |

|---|---|---|---|---|---|---|---|---|---|

| 0.0 | 67% | 0% | 100% | 67% | 0% | 100% | 33% | 67% | 54% |

| 3.6 | 67% | 0% | 0% | 0% | 0% | 67% | 67% | 67% | 33% |

| 7.1 | 100% | 0% | 0% | 0% | 67% | 100% | 33% | 0% | 38% |

| 10.7 | 0% | 0% | 0% | 0% | 67% | 67% | 0% | 33% | 21% |

| 14.3 | 100% | 0% | 0% | 0% | 33% | 0% | 0% | 33% | 21% |

| 17.9 | 0% | 0% | 0% | 0% | 0% | 0% | 0% | 0% | 0% |

| 21.4 | 0% | 0% | 0% | 0% | 0% | 0% | 0% | 0% | 0% |

| 25.0 | 0% | 0% | 0% | 0% | 0% | 0% | 33% | 0% | 4% |

act_v2_exp4_n64/best.pth.

Evaluated with --teleport --zero_rotation --clean_scene --max_steps 600, random object positions per viewpoint (seed=42).

| theta \ phi | 0deg | 45deg | 90deg | 135deg | 180deg | 225deg | 270deg | 315deg | Avg |

|---|---|---|---|---|---|---|---|---|---|

| 0.0deg | 100% | 67% | 33% | 67% | 67% | 100% | 33% | 67% | 67% |

| 3.6deg | 100% | 0% | 0% | 100% | 67% | 100% | 33% | 0% | 50% |

| 7.1deg | 100% | 33% | 33% | 67% | 67% | 33% | 33% | 0% | 46% |

| 10.7deg | 0% | 33% | 67% | 0% | 33% | 0% | 0% | 0% | 17% |

| 14.3deg | 0% | 67% | 0% | 0% | 33% | 0% | 0% | 0% | 12% |

| 17.9deg | 0% | 0% | 0% | 0% | 0% | 33% | 0% | 0% | 4% |

| 21.4deg | 67% | 0% | 0% | 0% | 0% | 0% | 0% | 0% | 8% |

| 25.0deg | 0% | 0% | 0% | 0% | 0% | 0% | 0% | 0% | 0% |

Each cell shows one eval episode at that viewpoint. Border color indicates success rate.

/data/cameron/para_normalized_losses/libero/checkpoints/act_v2_exp4_n64/best.pth

export PYTHONPATH=/data/cameron/LIBERO:$PYTHONPATH

export DINO_REPO_DIR=/data/cameron/keygrip/dinov3

export DINO_WEIGHTS_PATH=/data/cameron/keygrip/dinov3/weights/dinov3_vits16plus_pretrain_lvd1689m-4057cbaa.pth

CUDA_VISIBLE_DEVICES=4 python eval.py --model_type act \

--checkpoint /data/cameron/para_normalized_losses/libero/checkpoints/act_v2_exp4_n64/best.pth \

--benchmark libero_spatial --task_id 0 --n_episodes 3 \

--teleport --zero_rotation --clean_scene --max_steps 600 \

--shift_dx SHIFT_DX --shift_dy SHIFT_DY \

--cam_theta THETA --cam_phi PHI \

--out_dir results/act_baseline/vp_VI --save_video

python eval_full_grid.py # evaluates all 64 viewpoints, saves results/act_baseline/grid_results.json