Method

How PARA Works

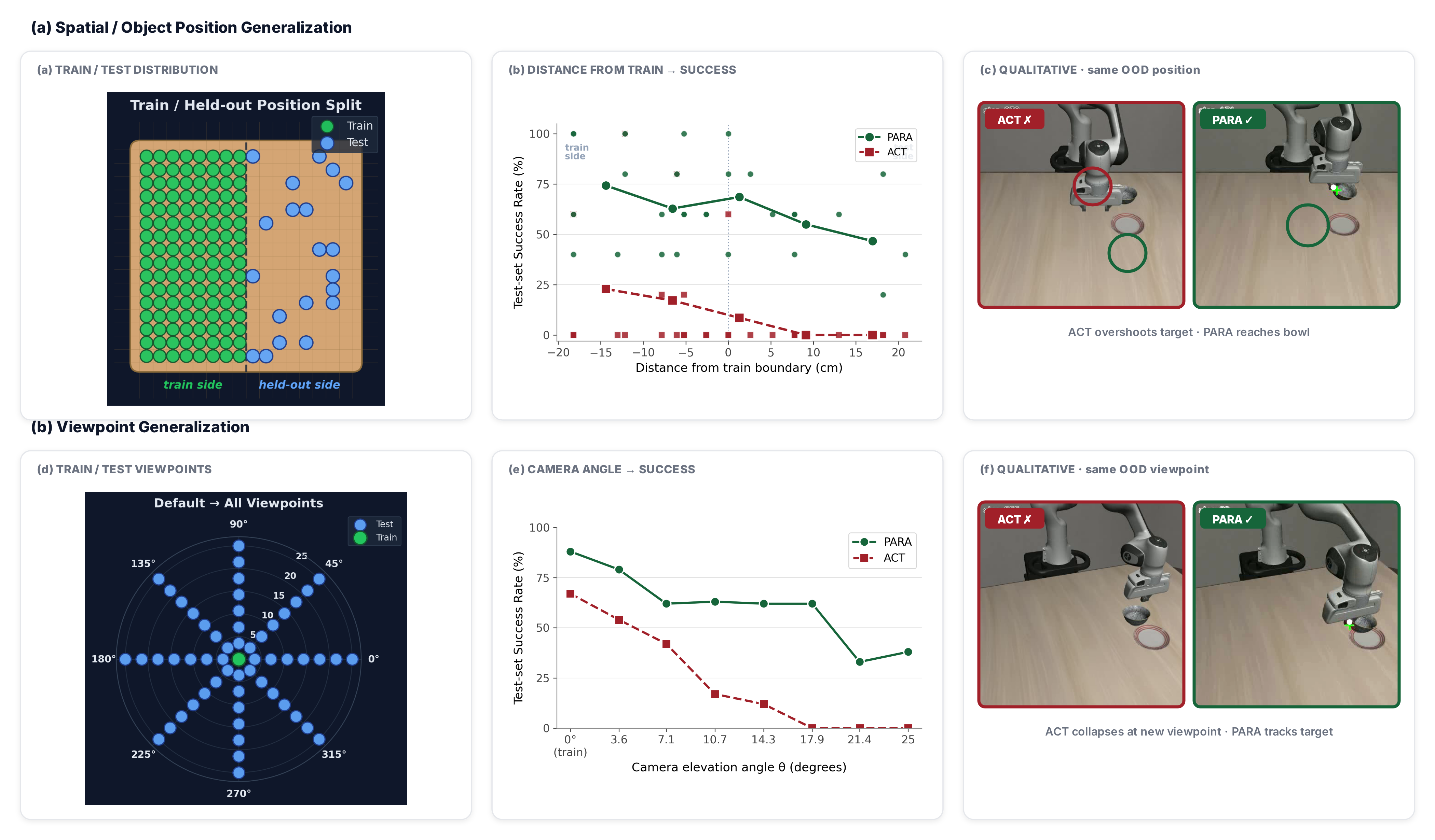

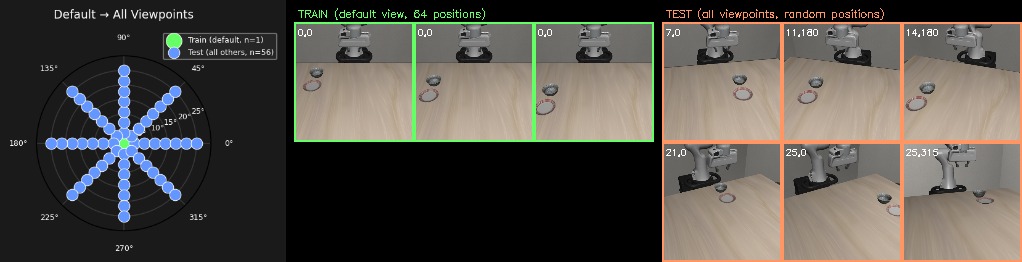

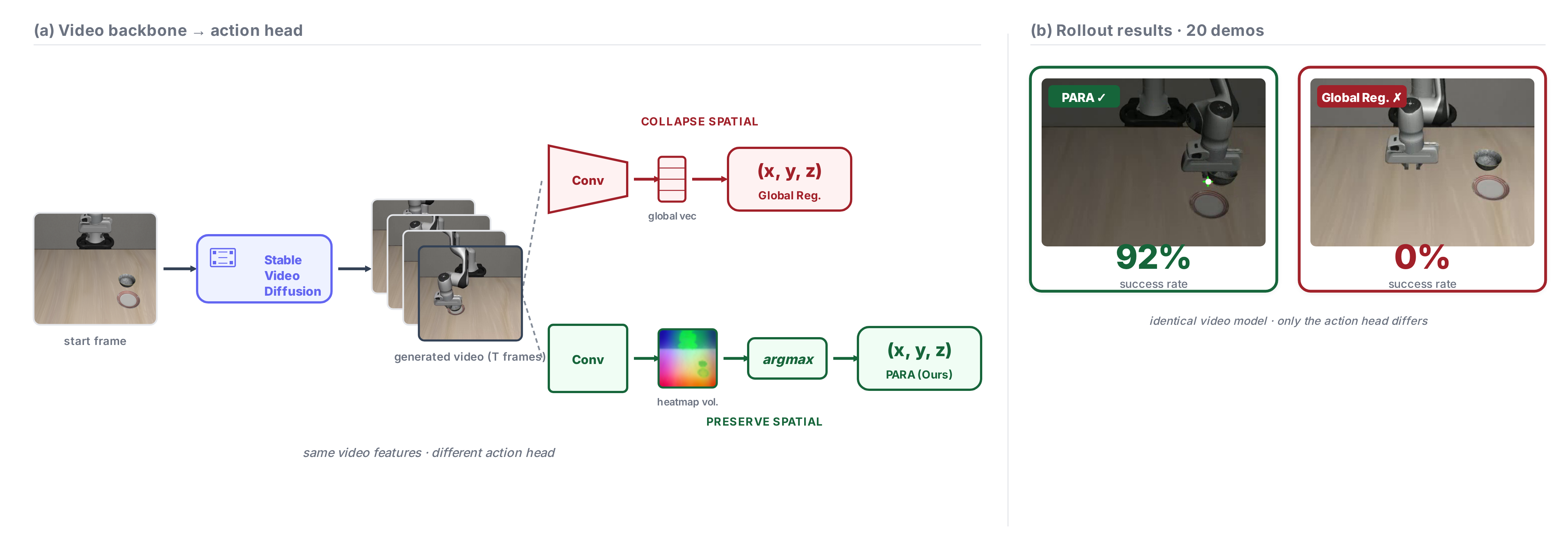

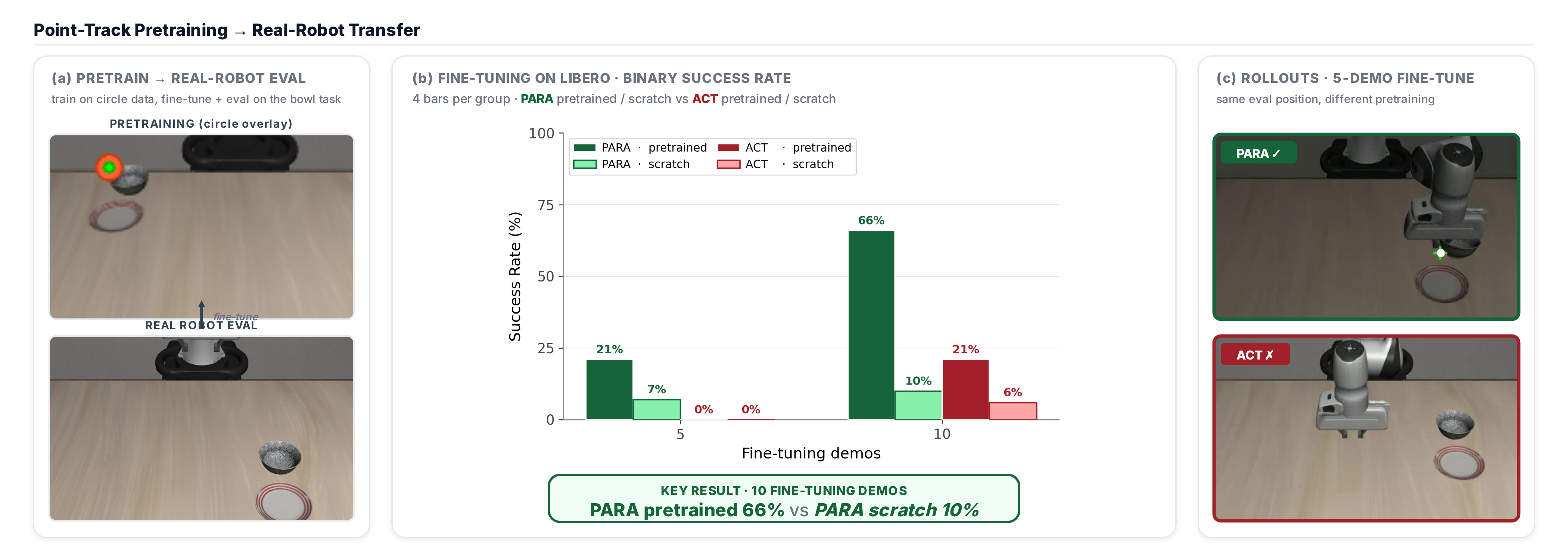

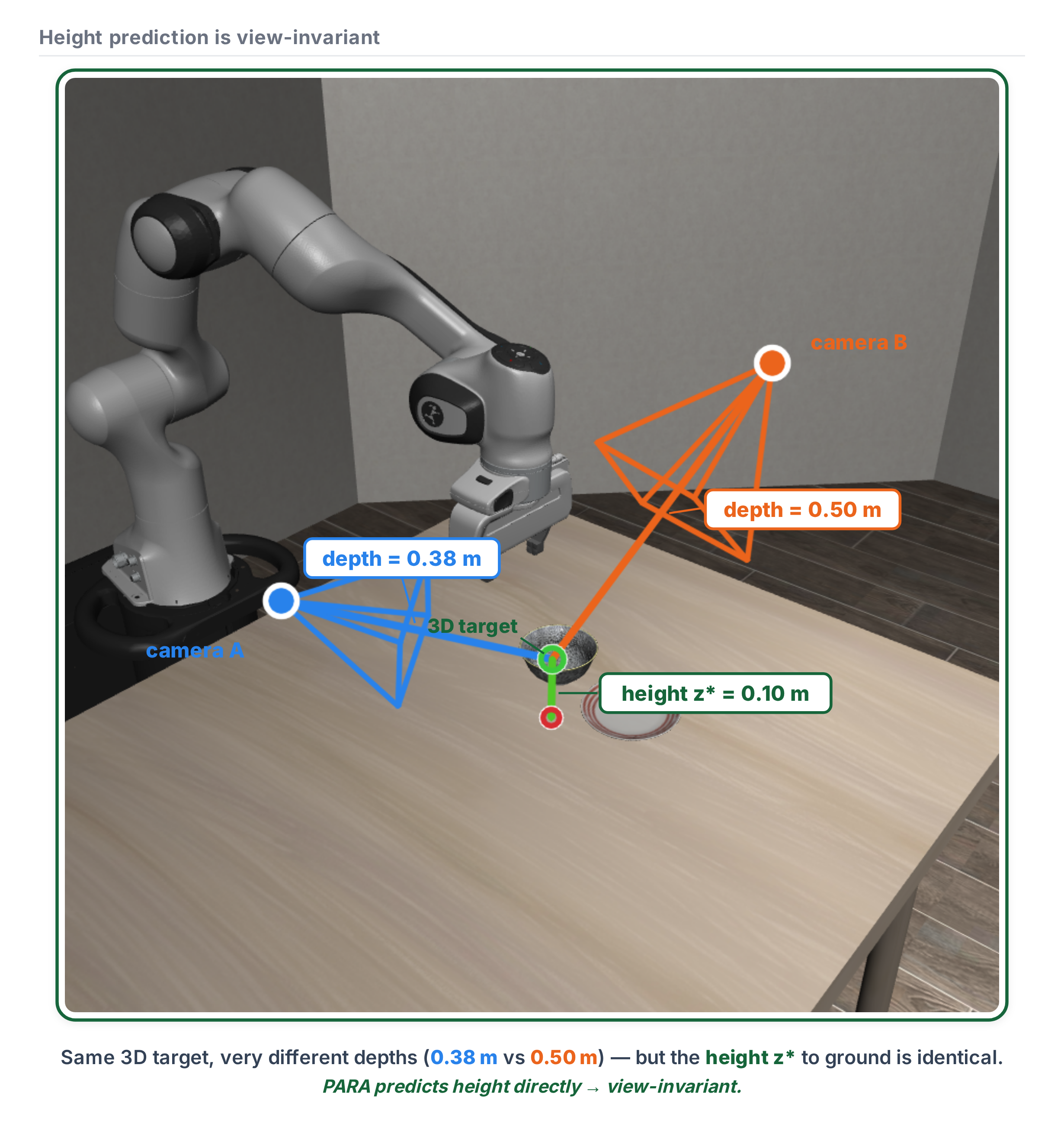

PARA decomposes end-effector action prediction into pixel localization and height estimation—two steps that are naturally equivariant to spatial and viewpoint changes.

Standard policies (e.g., ACT) regress actions from a global CLS token, forcing the model to implicitly solve correspondence, geometry, and control in an unstructured output space.

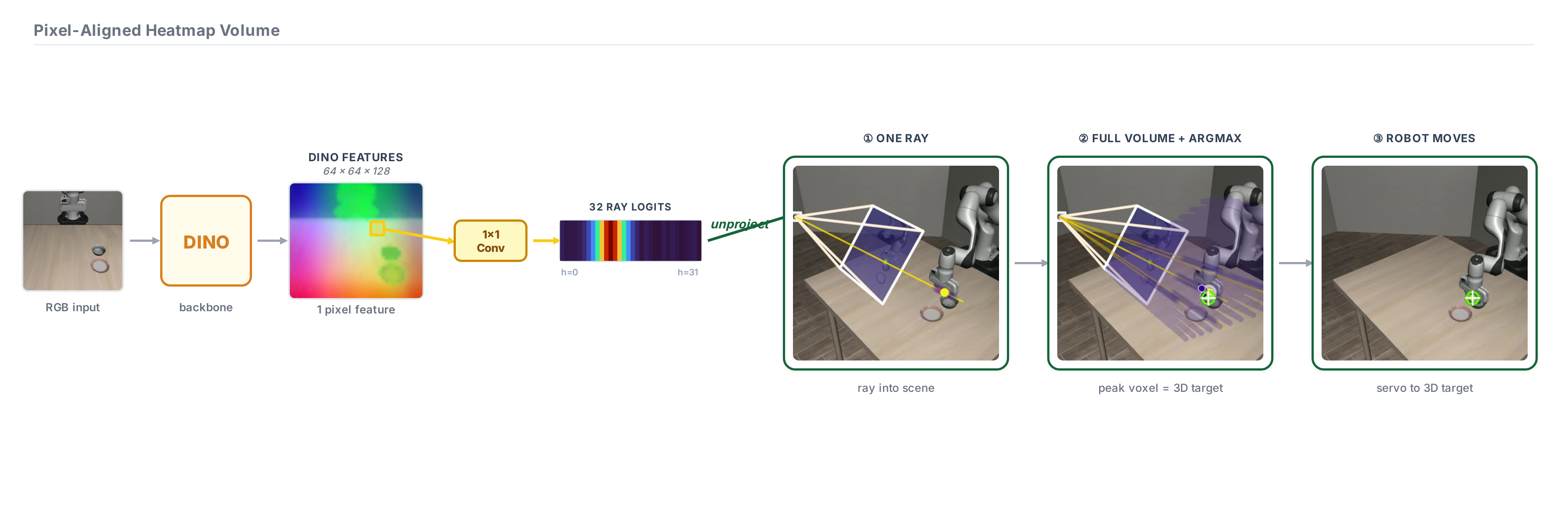

PARA decomposes this into the following steps:

- Where in the image and where along the ray? Predict logits along each pixel's camera ray to get a dense 3D heatmap volume in the camera frame. The argmax over this volume gives (u, v, z): the pixel coordinates and the depth along that pixel's ray.

- 3D recovery. Given (u, v, z), recover the 3D end-effector position by unprojecting the pixel with the camera intrinsics and transforming the result with the camera pose.
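The localization and 3D recovery steps above can be sketched in NumPy. This is a minimal illustration, not PARA's actual implementation, and it rests on several assumptions that are not stated in the source: the heatmap volume is an array of shape (D, H, W) with one logit per depth bin per pixel, `depth_bins` gives the depth along the optical axis for each bin, the camera is a pinhole model with intrinsics `K`, and `T_world_cam` is a 4x4 camera-to-world transform. All function and variable names are hypothetical.

```python
import numpy as np

def argmax_uvz(logits, depth_bins):
    """Return (u, v, z) of the highest logit in a (D, H, W) volume.

    Assumption: axis 0 indexes depth bins, axis 1 rows (v), axis 2 columns (u).
    """
    d, v, u = np.unravel_index(np.argmax(logits), logits.shape)
    return int(u), int(v), float(depth_bins[d])

def unproject(u, v, z, K, T_world_cam):
    """Lift pixel (u, v) at depth z to a 3D point in the world frame.

    Assumption: z is depth along the optical axis of a pinhole camera.
    """
    # Back-projection into the camera frame: p_cam = z * K^-1 [u, v, 1]^T
    p_cam = z * (np.linalg.inv(K) @ np.array([u, v, 1.0]))
    # Homogeneous transform from the camera frame to the world frame
    p_world = T_world_cam @ np.append(p_cam, 1.0)
    return p_world[:3]

# Toy setup: hypothetical intrinsics and a camera shifted 1 unit along world z.
K = np.array([[100.0, 0.0, 32.0],
              [0.0, 100.0, 24.0],
              [0.0, 0.0, 1.0]])
T_world_cam = np.eye(4)
T_world_cam[:3, 3] = [0.0, 0.0, 1.0]

depth_bins = np.linspace(0.2, 1.0, 5)   # five hypothetical depth bins
logits = np.zeros((5, 48, 64))          # (D, H, W) volume
logits[2, 24, 42] = 10.0                # single peak: bin 2, v=24, u=42

u, v, z = argmax_uvz(logits, depth_bins)
position = unproject(u, v, z, K, T_world_cam)
```

A hard argmax is used here because the text names it. How the volume is discretized along each ray, and whether z denotes axial depth or distance along the ray, are choices this sketch makes for illustration only.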

Gripper open/close and rotation are predicted by indexing the feature map at the predicted pixel location.
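Indexing the feature map at the predicted pixel can be sketched as follows. This is an assumption-laden illustration: the (C, H, W) feature layout, the `stride` parameter, the linear heads, and the 3-dimensional rotation output are all hypothetical placeholders, since the source does not specify the head architecture or the rotation parameterization.

```python
import numpy as np

def index_features(feature_map, u, v, stride=1):
    """Pick the feature vector at pixel (u, v) from a (C, H, W) map.

    `stride` is a hypothetical downsampling factor between the image
    and the feature map (1 means the map is at image resolution).
    """
    return feature_map[:, v // stride, u // stride]

rng = np.random.default_rng(0)
C, H, W = 8, 48, 64
feature_map = rng.standard_normal((C, H, W))

# Feature vector at the predicted pixel (u=42, v=24 chosen for illustration)
feat = index_features(feature_map, u=42, v=24)

# Hypothetical linear heads with random placeholder weights
W_grip = rng.standard_normal((1, C))
W_rot = rng.standard_normal((3, C))    # output size 3 is a placeholder

gripper_open = bool((W_grip @ feat)[0] > 0.0)  # binary open/close decision
rotation = W_rot @ feat                        # raw rotation output
```

Because both heads read from the same location that the position head selected, their inputs move with the predicted pixel rather than depending on a single global token.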