Joint training of SVD video diffusion model + PARA action heads on LIBERO ood_objpos_task0. SVD generates video (7 frames at 576x320) while PARA heads predict pixel-aligned actions from UNet intermediate features (up_block_1 + up_block_2). Separate learning rates: UNet 1e-6 (preserves video quality), PARA heads 1e-4. Eval with teleport + zero rotation + clean scene: 0/5 success at 2000 training steps — model predicts heatmaps in correct region but needs more training.

Training Data

Dataset: /data/libero/ood_objpos_task0/libero_spatial/task_0 — 256 demos, 32 frames each. Clean scene (no distractors/furniture). Frame stride 1 (already subsampled at stride 3 from original). Video: 7 frames at 576x320. PARA: 448x448 with N_HEIGHT_BINS=32, 64x64 heatmap grid. EMA-normalized losses: volume CE + gripper CE + diffusion MSE.

Example training trajectory — ood_objpos_task0 demo 0 (clean scene, stride 3)

Example training trajectory — ood_objpos_task0 demo 10 (different object positions)

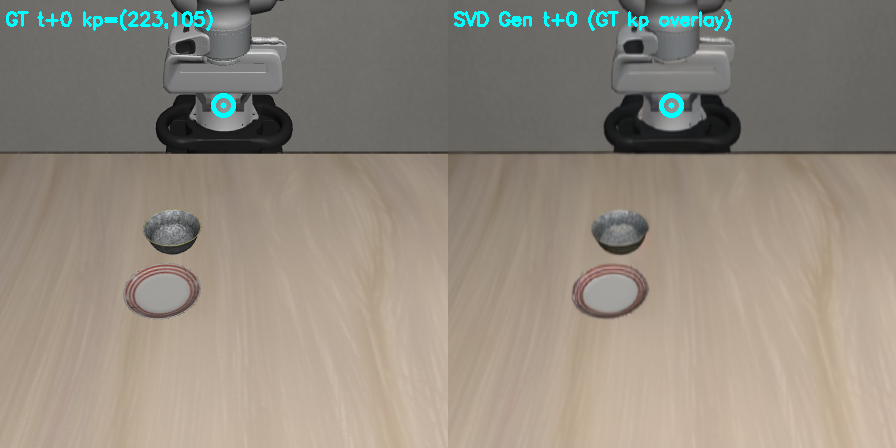

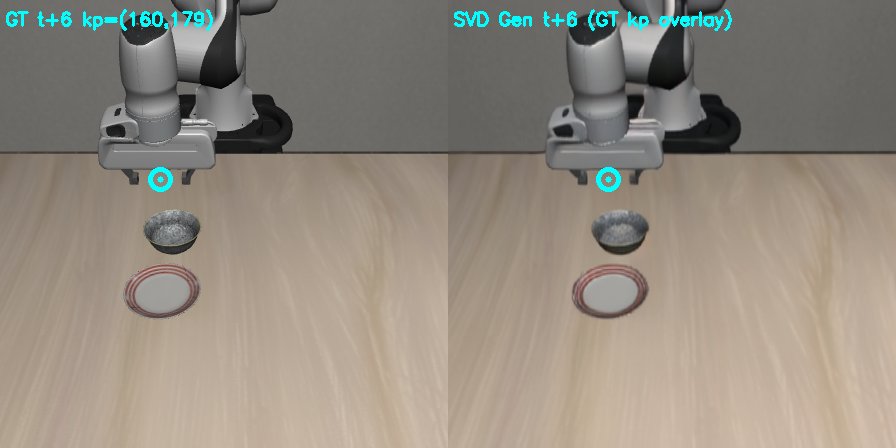

Video-PARA Alignment Verification

GT frame with GT keypoint (left) vs SVD generated (right) — aligned at t+0

Alignment at t+6 — robot arm moves, keypoint tracks EEF in both GT and generated

Test Setup

Closed-loop eval in LIBERO simulator: teleport mode (servo to predicted 3D target), zero rotation, clean scene (no distractors). 5 episodes, 600 max steps per episode. Camera: agentview, proper intrinsics from get_camera_intrinsic_matrix. Model: checkpoint-2000 from joint training (separate LRs: UNet 1e-6, PARA 1e-4).

Results

| Model | Training Steps | Success Rate | Notes |

|---|---|---|---|

| SVD+PARA Joint (ckpt-2000) | 2000 | 0/5 (0%) | Early training, heatmaps in correct region but imprecise |

Training Visualization (wandb)

GT frames with heatmap (left) vs SVD generated frames with heatmap (right). Cyan = GT keypoint, Red = predicted keypoint. Shows model learning to focus heatmaps on EEF region.

Step 200 — early training, heatmaps starting to focus

Step 2000 — heatmaps more concentrated on correct region

Example Eval Rollouts

Episode 0 — GT+heatmap (left) vs Generated+heatmap (right). Robot moves toward bowl but fails to grasp.

Episode 1 — similar pattern, heatmap concentrates on correct region

Analysis

Key findings: - SVD video generation quality preserved with separate LRs (UNet 1e-6 barely moves) - PARA heatmaps learn to focus on bowl/EEF region quickly (vol loss: 11.7 → 1-3 by step 200) - Gripper loss converges fast (3.4 → 0.1-0.5) - 0% eval success expected at 2000 steps — heatmaps are in the right area but not precise enough - Video-PARA temporal alignment verified: GT keypoints match GT frames, SVD generated video aligns with GT trajectory - Architecture: up_block_1 (1280ch→128) + up_block_2 (640ch→128) → concat 256ch → 3x conv → PARA heads at 64x64 Potential issues: - Camera intrinsics were initially hardcoded (fixed to use proper get_camera_intrinsic_matrix) - NCCL P2P must be disabled on this machine for multi-GPU (NCCL_P2P_DISABLE=1)

Next Steps & Concerns

Next steps: - Train longer (10K-50K steps) and re-eval - Compare with frozen-backbone PARA (detach features, no joint training) as baseline - Compare with DINO backbone PARA on same dataset for apples-to-apples - Sweep separate LR ratios (currently 100:1 PARA:UNet) - Add rotation prediction (currently zero rotation) Concerns: - 576x320 video resolution introduces aspect ratio distortion from 448x448 training images - SVD video model was originally trained on parsed_libero, then fine-tuned on ood_objpos — domain gap may remain - Denoising noise level affects feature quality — currently extracting from random training noise level, not controlled

Reproducibility

# Joint training (4 GPUs: 0,3,5,8)

cd /data/cameron/vidgen/svd_motion_lora/Motion-LoRA

bash train_joint.sh

# Eval (clean scene, teleport, zero rotation)

CUDA_VISIBLE_DEVICES=4 python eval_joint.py \

--checkpoint output_svd_para_joint/checkpoint-2000 \

--n_episodes 5 --clean_scene --max_steps 600